News

Google Catches Up to Apple with Augmented Reality Kit for Mobile Apps

- By David Ramel

- August 30, 2017

Google is catching up with arch-rival Apple in the mobile augmented reality space (popularized by Pokémon GO), announcing ARCore to keep pace with Apple's ARKit, unveiled in June.

Offered as an early preview SDK, ARCore can be used in development environments such as Android Studio, Unity and Unreal. Along with SDKs for those tools, Google has published guides and APIs and set up a forum where developers can connect, ask questions and discuss issues.

In its promotional materials, Google has been emphasizing the ability of ARCore -- which builds upon the company's Tango project -- to operate "at Android scale."

"We've been developing the fundamental technologies that power mobile AR over the last three years with Tango, and ARCore is built on that work," engineering exec Dave Burke said in a blog post yesterday. "But, it works without any additional hardware, which means it can scale across the Android ecosystem. ARCore will run on millions of devices, starting today with the Pixel and Samsung's S8, running 7.0 Nougat and above. We're targeting 100 million devices at the end of the preview. We're working with manufacturers like Samsung, Huawei, LG, ASUS and others to make this possible with a consistent bar for quality and high performance."

Apple, meanwhile, got about a three-month head start with ARKit.

"iOS 11 introduces ARKit, a new framework that allows you to easily create unparalleled augmented reality experiences for iPhone and iPad," Apple said at the time. "By blending digital objects and information with the environment around you, ARKit takes apps beyond the screen, freeing them to interact with the real world in entirely new ways."

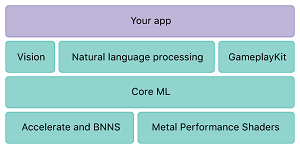

Core ML, the Foundation of ARKit (source: Apple)

Google says its new ARCore uses three key technologies:

- Motion tracking allows the phone to understand and track its position relative to the world.

- Environmental understanding allows the phone to detect the size and location of flat horizontal surfaces like the ground or a coffee table.

- Light estimation allows the phone to estimate the environment's current lighting conditions.
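
To make the last two ideas concrete, here is a minimal Python sketch. It is not Google's implementation. It assumes feature points arrive as (x, y, z) tuples in meters with y pointing up, and camera pixels as (r, g, b) values from 0 to 255. The function names, the 5 cm height band and the use of Rec. 601 brightness weights are illustrative choices, not part of ARCore.

```python
from collections import Counter


def detect_horizontal_plane(points, bin_size=0.05, min_points=3):
    """Find the most populated height band among 3D feature points.

    Hypothetical helper: returns None when no band holds at least
    min_points points, otherwise the plane's height, center and size.
    """
    points = list(points)
    # Group points into height bands that are bin_size meters thick.
    bins = Counter(round(y / bin_size) for _, y, _ in points)
    if not bins:
        return None
    best_bin, count = bins.most_common(1)[0]
    if count < min_points:
        return None
    on_plane = [p for p in points if round(p[1] / bin_size) == best_bin]
    xs = [p[0] for p in on_plane]
    zs = [p[2] for p in on_plane]
    return {
        "height": sum(p[1] for p in on_plane) / len(on_plane),
        "center": ((min(xs) + max(xs)) / 2, (min(zs) + max(zs)) / 2),
        "size": (max(xs) - min(xs), max(zs) - min(zs)),
    }


def estimate_light(pixels):
    """Average brightness of (r, g, b) pixels, scaled to 0.0-1.0."""
    pixels = list(pixels)
    if not pixels:
        return 0.0
    # Rec. 601 luma weights: green contributes most to perceived brightness.
    total = sum(0.299 * r + 0.587 * g + 0.114 * b for r, g, b in pixels)
    return total / (255.0 * len(pixels))
```

Fed a handful of points clustered near tabletop height plus a few strays, `detect_horizontal_plane` reports the table's height and footprint, while `estimate_light` returns one number an app could use to dim or brighten virtual objects.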

ARCore in Animated Action (source: Google)

"Fundamentally, ARCore is doing two things: tracking the position of the mobile device as it moves, and building its own understanding of the real world," Google said. ARCore's motion tracking technology uses the phone's camera to identify interesting points, called features, and tracks how those points move over time. With a combination of the movement of these points and readings from the phone's inertial sensors, ARCore determines both the position and orientation of the phone as it moves through space.

"In addition to identifying key points, ARCore can detect flat surfaces, like a table or the floor, and can also estimate the average lighting in the area around it. These capabilities combine to enable ARCore to build its own understanding of the world around it."

Google warned that, as an early preview, ARCore may be subject to breaking changes in future iterations. In the meantime, it asks interested developers to begin experimenting with it and providing feedback on the early-stage APIs.
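
For developers who want a feel for the motion tracking idea Google describes before trying the preview, the blend of camera features and inertial readings can be sketched as a simple complementary filter. This is a toy illustration under stated assumptions, not ARCore's algorithm. It handles only yaw (left-right rotation), assumes distant feature points and a known focal length in pixels, and treats a turn to the right as positive. Every name and constant here is hypothetical.

```python
import math


def yaw_from_features(prev_pts, curr_pts, focal_px):
    """Estimate yaw change (radians) from matched 2D feature points.

    Assumes pure rotation: when the camera turns right, features
    slide left in the image, hence the sign flip.
    """
    if not prev_pts or len(prev_pts) != len(curr_pts):
        raise ValueError("need equal, non-empty lists of matched points")
    mean_dx = sum(c[0] - p[0] for p, c in zip(prev_pts, curr_pts)) / len(prev_pts)
    return -math.atan2(mean_dx, focal_px)


def fuse_yaw(prev_yaw, gyro_rate, dt, visual_delta, alpha=0.9):
    """Complementary filter: blend gyro-integrated yaw with camera-derived yaw."""
    gyro_yaw = prev_yaw + gyro_rate * dt    # responsive, but drifts over time
    visual_yaw = prev_yaw + visual_delta    # steadier, but depends on good features
    return alpha * gyro_yaw + (1 - alpha) * visual_yaw
```

Called once per camera frame, `fuse_yaw` leans on the gyroscope for quick response and on the tracked features to rein in drift. Production tracking systems estimate full 3D position and orientation with far more sophisticated methods.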

About the Author

David Ramel is an editor and writer at Converge 360.