Talend Promises 10 Minutes to Big Data with New Sandbox

- By David Ramel

- July 17, 2014

The Talend Big Data Sandbox aims to quicken the adoption of large-scale analytics, promising "zero to Big Data without coding in under 10 minutes."

The ready-to-run virtual environment combines the Talend Platform for Big Data with an Apache Hadoop distribution from Cloudera Inc., Hortonworks Inc. or MapR Technologies Inc., the data integration company said yesterday.

To help developers get started quickly, Talend said it's including a Big Data Insights Cookbook with four ready-to-go scenarios: clickstream analysis; social media sentiment analysis; log stream analysis of Apache weblogs; and extract, transform and load (ETL) offloading with Hadoop. The cookbook also comes with video tutorials.

"Big Data developers are scarce and can be very costly," said Talend exec Fabrice Bonan. "Talend enables current data integration developers to quickly connect, transform and manage diverse structured and unstructured data sources without any MapReduce programming, using graphical Eclipse-based tools to generate optimized code."

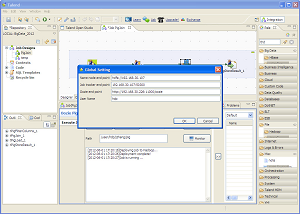

Using the Talend Big Data Platform

(source: Talend)

The Eclipse-based tools can generate Java, MapReduce, Pig or HiveQL code. Developers can build complex transformations using multiple languages and tools, such as Pig Latin, Sqoop, MapReduce/YARN, HiveQL and Java.

The sandbox comes with built-in connectivity to more than 800 data sources, including Big Data systems such as Hive, HBase and NoSQL databases. Developers also get access to online video tutorials and the open Talend online community, the company said.

Interested developers can download a 30-day trial of the sandbox.

The sandbox, which includes the Talend Platform for Big Data, runs as a virtual environment, so users must be running Oracle VirtualBox 4.2 or higher; VMware Fusion 5.0 or higher (on Macs); or VMware Player (for Windows). It also requires at least 6 GB of disk space and 8 GB of RAM.

About the Author

David Ramel is an editor and writer at Converge 360.