On JavaScript Performance Wars

The interminable JavaScript engine performance wars are continuing, with Microsoft landing the latest blow.

Striking this time was Limin Zhu, program manager for the Chakra JavaScript engine that powers the new Microsoft Edge Web browser.

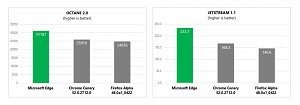

"Let's look at where Microsoft Edge stands at the moment, as compared to other major browsers on two established JavaScript benchmarks maintained by Google and Apple respectively," Zhu said in a blog post this week. "The results below show Microsoft Edge continuing to lead both benchmarks."

Microsoft's Results (source: Microsoft)

Those benchmarks are Octane 2.0 and JetStream 1.1. And the results indeed show Edge has an edge over recent versions of Chrome Canary and Firefox Alpha. Zhu delves deeply into the code tweaks that result in superior performance, providing nitty-gritty details that might interest hard-core JavaScript jockeys. They have to do with "deferred parsing for event-handlers and the memory optimizations in FunctionBody" and other technical minutiae.

Zhu said the code tweaks, claimed to reduce memory footprint and start-up time, will be reflected in the Edge browser in the upcoming Windows 10 Anniversary Update.

However, as with all benchmark tests, the results are subject to interpretation and can conflict with other measurements.

For example, just last month, Red Hat published a JavaScript Engine & Performance Comparison (V8, Chakra, Chakra Core) that concluded "V8 performs slightly better than Chakra and Chakra Core" using the same Octane 2.0 benchmark. Of course, different things were measured, and the Red Hat study didn't reflect Microsoft's latest performance tweaks. Enhancements will also continue on all JavaScript engines, so the question of the best-performing browser engine remains open.

Perhaps a more important question is the value of these benchmark tests to real-world users or even Web developers. Do they really provide much value? Even the testers themselves are quick to quote caveats.

"Benchmarks are an incomplete representation of the performance of the engine and should only be used for a rough gauge," Red Hat warned. "The benchmark results may not be 100 percent accurate and may also vary from platforms to platforms."

Microsoft also offered a disclaimer.

"All of our performance efforts are driven by data from the real-world Web and aim to enhance the actual end user experience," Zhu said. "However, we are still frequently asked about JavaScript benchmark performance, and while it doesn't always correspond directly to real-world performance, it can be useful at a high level and to illustrate improvement over time."

Over time, of course, benchmark results and superiority claims will change.

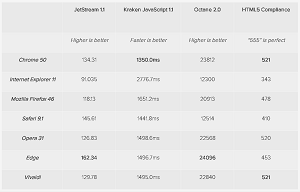

Three weeks ago, Digital Trends published its own tests, comparing Chrome 50, Edge and five other browsers across four benchmarks, including JetStream 1.1 and Octane 2.0. Those results are similar to Microsoft's, with Edge leading the JetStream and Octane benchmarks, but Chrome 50 leading in the Kraken JavaScript 1.1 and HTML5 Compliance benchmarks (in a tie with Vivaldi for the latter). Besides Vivaldi, the other browsers tested were IE 11, Mozilla Firefox 4, Safari 9.1 and Opera 31.

Digital Trends' Results (source: Digital Trends)

"The JetStream benchmark, which focuses on modern Web applications, has a surprising winner: Edge," Digital Trends said. "Microsoft's been working hard on optimizing its new browser, and it shows. Safari, Chrome, and Vivaldi aren't too far behind, though.

"Two JavaScript benchmarks, Mozilla's Kraken benchmark and Google's Octane 2.0, give us more split results. Edge just barely beat Chrome on Octane 2.0, while Chrome came out ahead on the Kraken test. The results suggest most modern browsers are pretty fast, however, with the exception of Internet Explorer 11. This suggests that Microsoft's Edge is a huge leap forward from its old browser, and that competition in the browser market is pretty tight overall."

Indeed, the market seems tight, the results seem close, and the question of relevance remains.

"A casual user probably won't notice a difference in the rendering speed between today's modern browsers," Digital Trends said. (Note: Digital Trends also reported on this week's kerfuffle between Microsoft and Opera about browser battery use.)

Microsoft itself called into question the usefulness of benchmark tests in a 2010 research note comparing "the behavior of JavaScript Web applications from commercial Web sites ... with the benchmarks."

The Microsoft research concluded "benchmarks are not representative of many real Web sites and that conclusions reached from measuring the benchmarks may be misleading."

Prominent JavaScript guru and author Douglas Crockford took the Microsoft research conclusion to heart and did his own comparison using JSLint. It showed Chrome 13.0.754.0 canary performed better than an older Chrome version and other browsers including Firefox, IE, Opera and Safari (this was before the advent of Edge):

JavaScript Performance

(Smaller is better)

| Browser | Seconds |
| --- | --- |
| Chrome 10.0.648.205 | 2.801 |
| Chrome 13.0.754.0 canary | 0.543 |
| Firefox 4.0.1 | 0.956 |
| IE 9.0.8112.16421 64 | 1.159 |
| IE 10.0.1000.16394 | 0.562 |
| Opera 11.10 | 1.106 |
| Safari 5.0.5 (7533.21.10) | 0.984 |
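To put those timings in proportion, it can help to express each one as a multiple of the fastest run. The TypeScript sketch below does only that arithmetic on the figures in the table. The `Timing` type and the `relativeToFastest` helper are hypothetical names made up for this illustration and are not part of the original comparison.

```typescript
// Timings in seconds, copied from the table above (smaller is better).
interface Timing {
  browser: string;
  seconds: number;
}

const jslintTimings: Timing[] = [
  { browser: "Chrome 10.0.648.205", seconds: 2.801 },
  { browser: "Chrome 13.0.754.0 canary", seconds: 0.543 },
  { browser: "Firefox 4.0.1", seconds: 0.956 },
  { browser: "IE 9.0.8112.16421 64", seconds: 1.159 },
  { browser: "IE 10.0.1000.16394", seconds: 0.562 },
  { browser: "Opera 11.10", seconds: 1.106 },
  { browser: "Safari 5.0.5 (7533.21.10)", seconds: 0.984 },
];

// Hypothetical helper: divides every timing by the fastest one, so the
// quickest browser gets a ratio of 1 and slower ones get larger ratios.
function relativeToFastest(
  timings: Timing[],
): Array<{ browser: string; ratio: number }> {
  const fastest = Math.min(...timings.map((t) => t.seconds));
  return timings
    .map((t) => ({ browser: t.browser, ratio: t.seconds / fastest }))
    .sort((a, b) => a.ratio - b.ratio); // fastest first
}

relativeToFastest(jslintTimings).forEach((entry) => {
  console.log(`${entry.browser}: ${entry.ratio.toFixed(2)}x the fastest time`);
});
```

Read this way, the older Chrome 10 build took roughly five times as long as the canary build, while IE 10 finished within a few percent of it.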

Noting that the Microsoft research debunked the then-available benchmarks, Crockford said the study "showed that benchmarks are not representative of the behavior of real Web applications. But lacking credible benchmarks, engine developers are tuning to what they have. The danger is that the performance of the engines will be tuned to non-representative benchmarks, and then programming styles will be skewed to get the best performance from the mistuned engines."
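The JSLint comparison described above amounts to timing a real program instead of a synthetic suite. Below is a minimal TypeScript sketch of that idea. It assumes a hypothetical helper named `medianRunTimeMs`, a caller-supplied `workload` function and a clock that defaults to `Date.now`. None of these names come from the studies cited here, and taking the median of several runs is this sketch's own assumption, not a documented part of any of those tests.

```typescript
// A clock returns the current time in milliseconds.
type Clock = () => number;

// Hypothetical helper: runs `workload` several times and returns the
// median elapsed time in milliseconds, as measured by `now`.
function medianRunTimeMs(
  workload: () => void,
  runs: number = 5,
  now: Clock = Date.now,
): number {
  if (runs < 1) {
    throw new RangeError("runs must be at least 1");
  }
  const samples: number[] = [];
  for (let i = 0; i < runs; i++) {
    const start = now();
    workload();
    samples.push(now() - start);
  }
  samples.sort((a, b) => a - b); // numeric sort, smallest first
  const mid = Math.floor(samples.length / 2);
  // The median is less sensitive than the mean to a single slow run.
  return samples.length % 2 === 1
    ? samples[mid]
    : (samples[mid - 1] + samples[mid]) / 2;
}

// Example workload (an assumption, standing in for real application code):
// build and sort an array of pseudo-random numbers.
const elapsed = medianRunTimeMs(() => {
  const data: number[] = [];
  for (let i = 0; i < 100000; i++) {
    data.push(Math.random());
  }
  data.sort((a, b) => a - b);
});
console.log(`median run time: ${elapsed} ms`);
```

Passing the clock as a parameter is a convenience for this sketch. A finer-grained timer such as `performance.now()` could be supplied where it is available, and the workload would ideally be code the site actually ships.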

So why do the benchmark tests keep getting published, caveats and all, some six years later?

I don't know, but it makes good copy for an end-of-the-week tech blog.

Do JavaScript benchmark tests help Web developers fine-tune their programming to deliver the best possible experience to users? Comment here or drop me a line.

Posted by David Ramel on June 24, 2016